Self-Driving Car Vision System

This project implements a vision system for self-driving cars. It covers three core perception tasks: ground plane estimation, lane boundary detection, and object distance estimation. These are built on semantic segmentation, object detection, and depth estimation. A separate pipeline estimates the camera trajectory from image sequences.

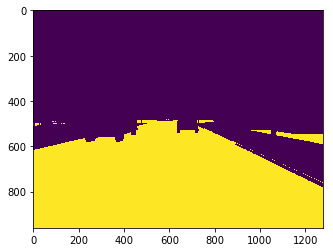

1. Plane Estimation

The ground plane is estimated from depth data with RANSAC, which fits a 3D plane to the road points while ignoring points that do not lie on it. Knowing where the road surface is provides the basis for stable vehicle navigation.

Key Technologies: RANSAC, Depth Estimation, 3D Plane Fitting.
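As an illustration of the idea, here is a minimal NumPy sketch of RANSAC plane fitting. It is not the project's actual code: the function names, the iteration count, and the 0.05 inlier threshold are assumptions chosen for the example, and the input is assumed to be an N x 3 array of 3D points already back-projected from the depth map.

```python
import numpy as np

def fit_plane(points):
    """Least-squares plane through the points.

    Returns (normal, d) with unit normal such that normal . p + d = 0.
    """
    centroid = points.mean(axis=0)
    # The plane normal is the right singular vector with the smallest
    # singular value of the centred point cloud.
    _, _, vt = np.linalg.svd(points - centroid, full_matrices=False)
    normal = vt[-1]
    return normal, -normal.dot(centroid)

def ransac_plane(points, n_iters=200, threshold=0.05, seed=0):
    """Hypothetical helper: returns (normal, d, inlier_mask)."""
    rng = np.random.default_rng(seed)
    best_inliers = None
    for _ in range(n_iters):
        # 1. Fit a candidate plane to three randomly chosen points.
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal, d = fit_plane(sample)
        # 2. Count points whose distance to the plane is below the threshold.
        inliers = np.abs(points @ normal + d) < threshold
        if best_inliers is None or inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    # 3. Refit on all inliers of the best candidate.
    normal, d = fit_plane(points[best_inliers])
    return normal, d, best_inliers
```

The threshold is in the same units as the points (metres here, by assumption) and would need tuning to the noise level of the depth data.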

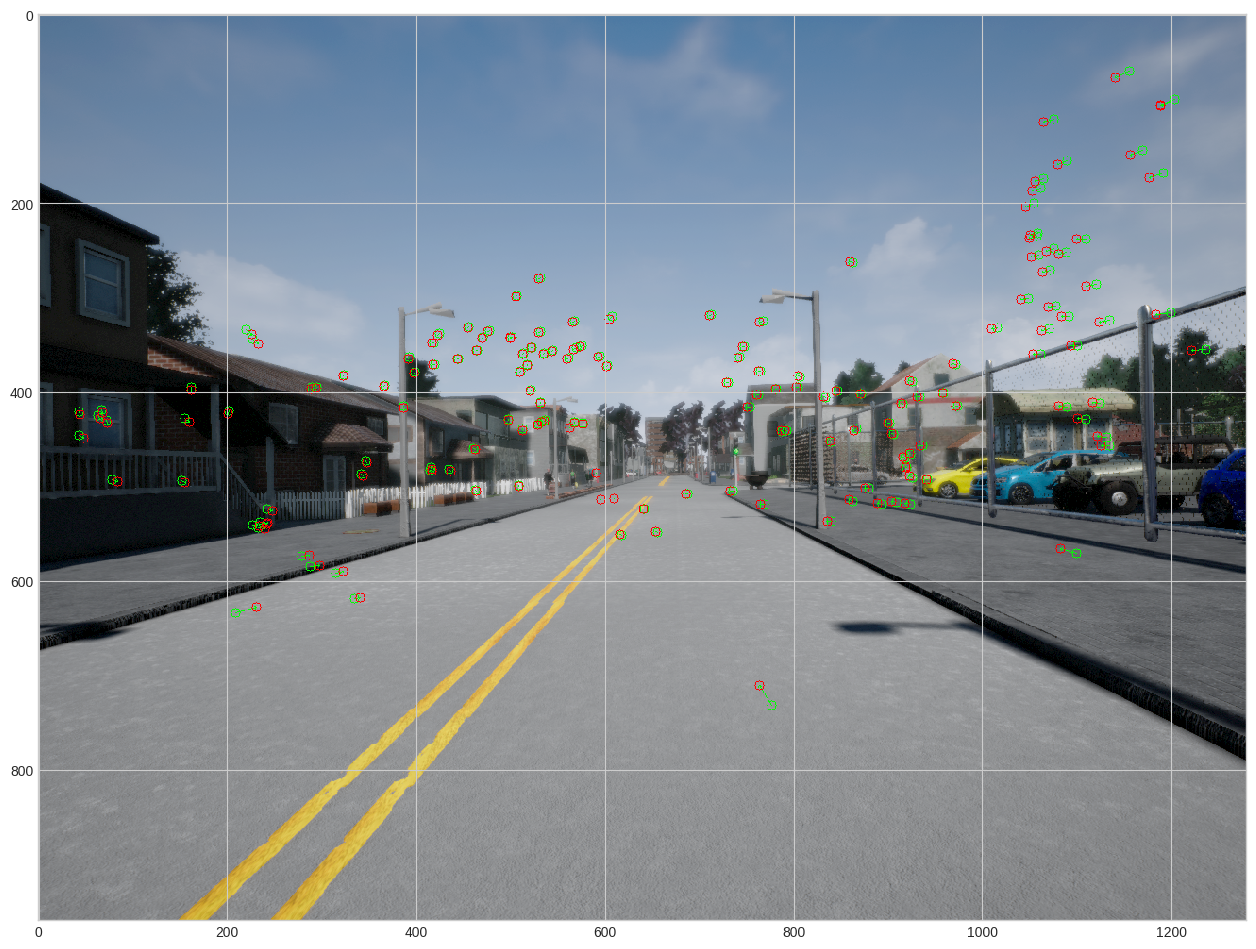

2. Lane Boundary Detection

Lane boundaries are detected using semantic segmentation, Canny edge detection, and line estimation with the Hough transform. Redundant lines are then merged and near-horizontal lines are filtered out, so that only distinct lines pointing along the direction of travel remain as lane boundary candidates.

Key Technologies: OpenCV, Semantic Segmentation, Canny Edge Detection, Hough Transform.
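The filtering and merging step can be sketched as follows. This is one possible approach, not necessarily the one used in the project: the function name and all three tolerances are hypothetical, and the input is assumed to be a list of `(x1, y1, x2, y2)` pixel segments such as those a probabilistic Hough transform returns. Lines are written as `x = a * y + b` so that vertical lines need no special case.

```python
import math

def filter_and_merge(segments, min_angle_deg=20.0, slope_tol=0.1, offset_tol=20.0):
    """Drop near-horizontal segments and average near-duplicate lines.

    Returns a list of (a, b) pairs describing lines x = a * y + b.
    """
    clusters = []  # each cluster is a list of (a, b) pairs for similar lines
    for x1, y1, x2, y2 in segments:
        dx, dy = x2 - x1, y2 - y1
        # Angle to the image x-axis: 0 = horizontal, 90 = vertical.
        angle = math.degrees(math.atan2(abs(dy), abs(dx)))
        if angle < min_angle_deg:
            continue  # near-horizontal, unlikely to be a lane boundary
        a = dx / dy          # safe: dy != 0 once horizontals are removed
        b = x1 - a * y1      # x position of the line at image row y = 0
        for cluster in clusters:
            mean_a = sum(p[0] for p in cluster) / len(cluster)
            mean_b = sum(p[1] for p in cluster) / len(cluster)
            if abs(a - mean_a) < slope_tol and abs(b - mean_b) < offset_tol:
                cluster.append((a, b))
                break
        else:
            clusters.append([(a, b)])  # no similar line seen yet
    # One averaged line per cluster.
    return [
        (sum(p[0] for p in c) / len(c), sum(p[1] for p in c) / len(c))
        for c in clusters
    ]
```

Comparing offsets at row `y = 0` is a simplification. Comparing them at a row near the bottom of the image, where the lanes are closest to the car, may separate lanes more reliably.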

3. Object Distance Estimation

2D object detection is combined with depth estimation to compute the minimum distance to each detected object, taken over the depth pixels that fall inside its bounding box. This gives a proximity measurement for collision avoidance, a critical function in autonomous driving.

Key Technologies: Object Detection, Depth Estimation, Euclidean Distance Calculation.
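A minimal sketch of the distance computation, under stated assumptions: `x`, `y`, and `z` are hypothetical H x W arrays holding each pixel's 3D coordinates in the camera frame (in metres), and `box` is a detector bounding box in pixel coordinates with exclusive maximum bounds. The function name is illustrative only.

```python
import numpy as np

def min_distance_to_object(x, y, z, box):
    """Smallest Euclidean distance from the camera to any pixel in the box.

    box = (x_min, y_min, x_max, y_max) in pixels, max bounds exclusive.
    """
    x_min, y_min, x_max, y_max = box
    rows, cols = slice(y_min, y_max), slice(x_min, x_max)
    # Per-pixel distance from the camera origin, restricted to the box.
    dist = np.sqrt(x[rows, cols] ** 2 + y[rows, cols] ** 2 + z[rows, cols] ** 2)
    return float(dist.min())
```

Because a bounding box also contains background pixels, a real pipeline might restrict the minimum to pixels the segmentation assigns to the object. The sketch leaves that out.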

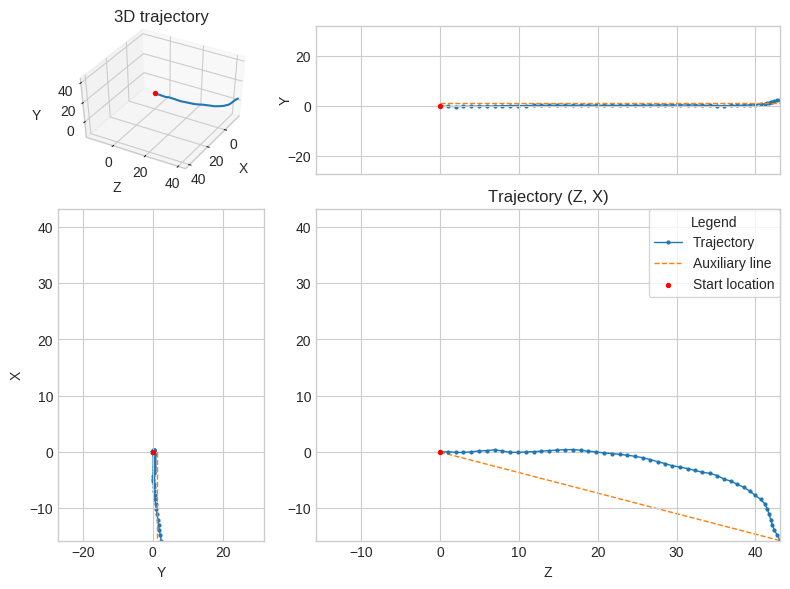

Trajectory Estimation from Visual Data

A separate pipeline estimates camera trajectories from 2D image sequences using feature matching and pose estimation. It tracks the camera's frame-to-frame motion in 3D space and chains those motions together to reconstruct the trajectory over time.

Key Technologies: ORB Feature Extraction, PnP (Perspective-n-Point), Essential Matrix Decomposition, RANSAC.
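Once a relative pose has been estimated for each pair of consecutive frames, the trajectory follows by chaining those poses. The sketch below shows only this accumulation step. It assumes each `(R, t)` gives the pose of camera k expressed in the frame of camera k-1. If the pose estimator returns the inverse mapping, each transform has to be inverted first. The function name is hypothetical.

```python
import numpy as np

def accumulate_trajectory(relative_poses):
    """Chain relative camera poses into positions in the first camera's frame.

    relative_poses: iterable of (R, t), R a 3x3 rotation and t a 3-vector,
    giving camera k's pose in the frame of camera k-1 (assumed convention).
    Returns an (N + 1) x 3 array of camera positions, starting at the origin.
    """
    pose = np.eye(4)  # homogeneous pose of the current camera in frame 0
    positions = [pose[:3, 3].copy()]
    for R, t in relative_poses:
        step = np.eye(4)
        step[:3, :3] = R
        step[:3, 3] = np.ravel(t)
        pose = pose @ step  # compose with the newest relative motion
        positions.append(pose[:3, 3].copy())
    return np.array(positions)
```

Translations recovered from an essential matrix are known only up to scale, so a trajectory built purely from them has arbitrary scale unless depth or another metric cue fixes it.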

Conclusion

This self-driving car vision system integrates several vision tasks into a single environment perception pipeline. By combining plane estimation, lane boundary detection, and object distance estimation, it provides the road surface, lane, and obstacle distance information that safe autonomous navigation depends on.

Key Challenges Addressed: Noisy detections, false positives, real-time efficiency, and robust lane tracking in complex driving environments.